Yudi Shi, Shangzhe Di, Qirui Chen, Qinian Wang, Xiaolong Jiang, Yao Hu, Weidi Xie

CVPR 2026 Findings

project page / arXiv

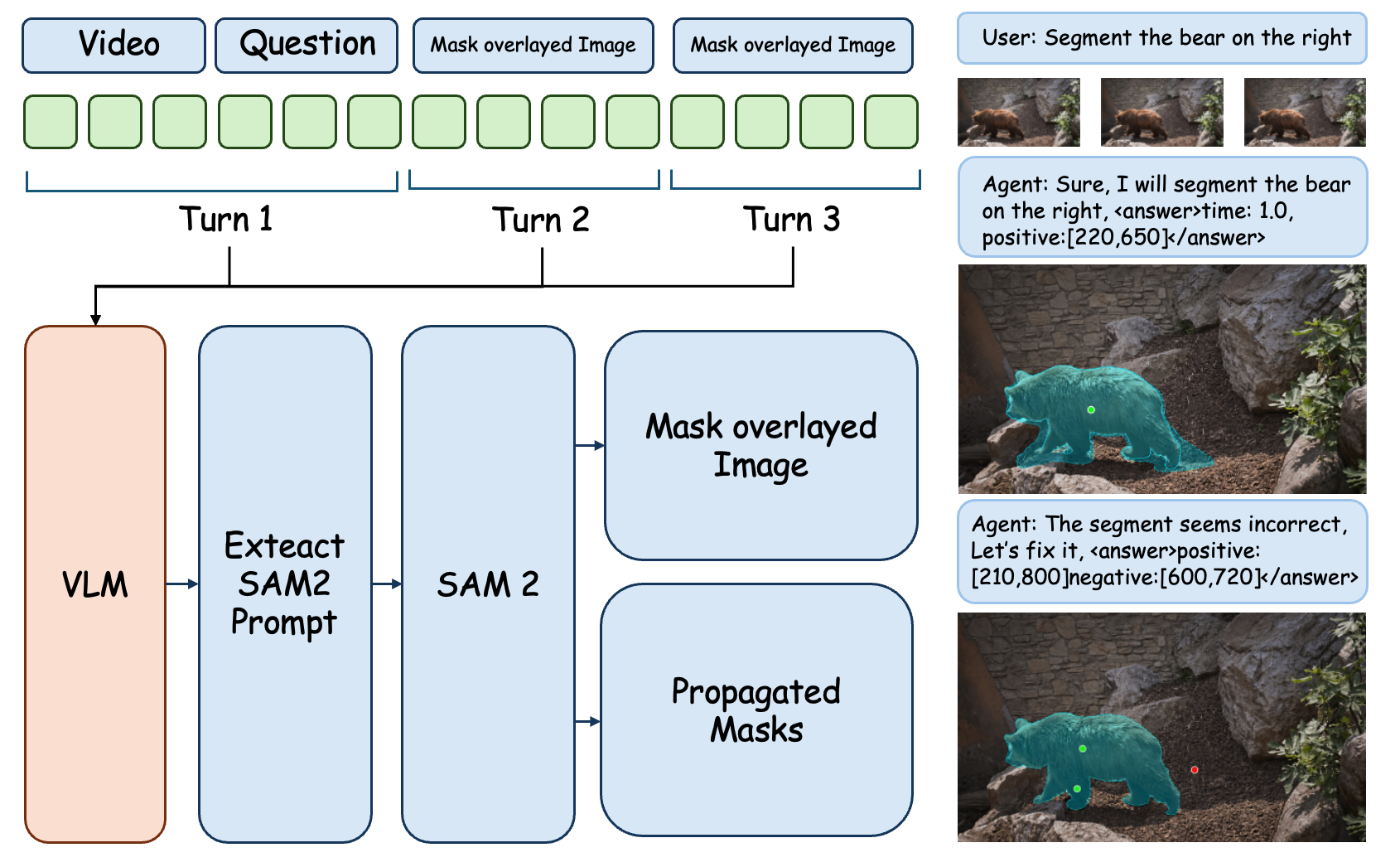

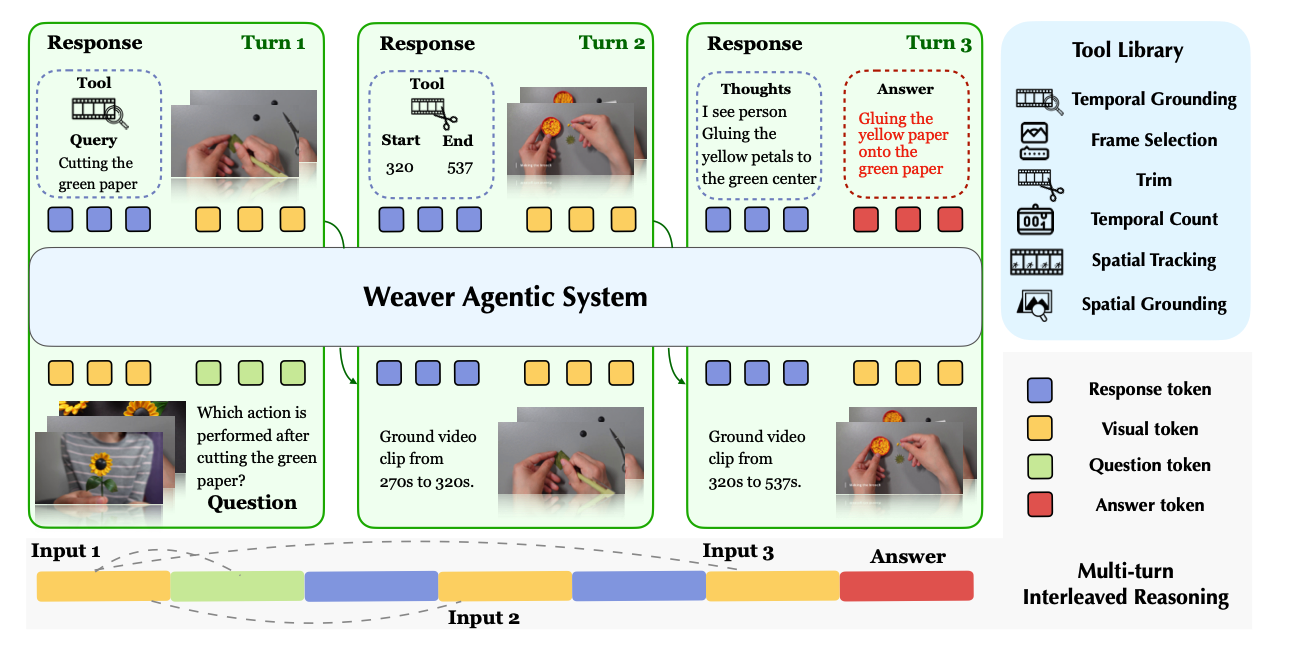

Weaver is an end-to-end trainable multimodal reasoning agentic system. It addresses the limitations of text-centric Chain-of-Thought in video reasoning by dynamically invoking tools and leveraging reinforcement learning.